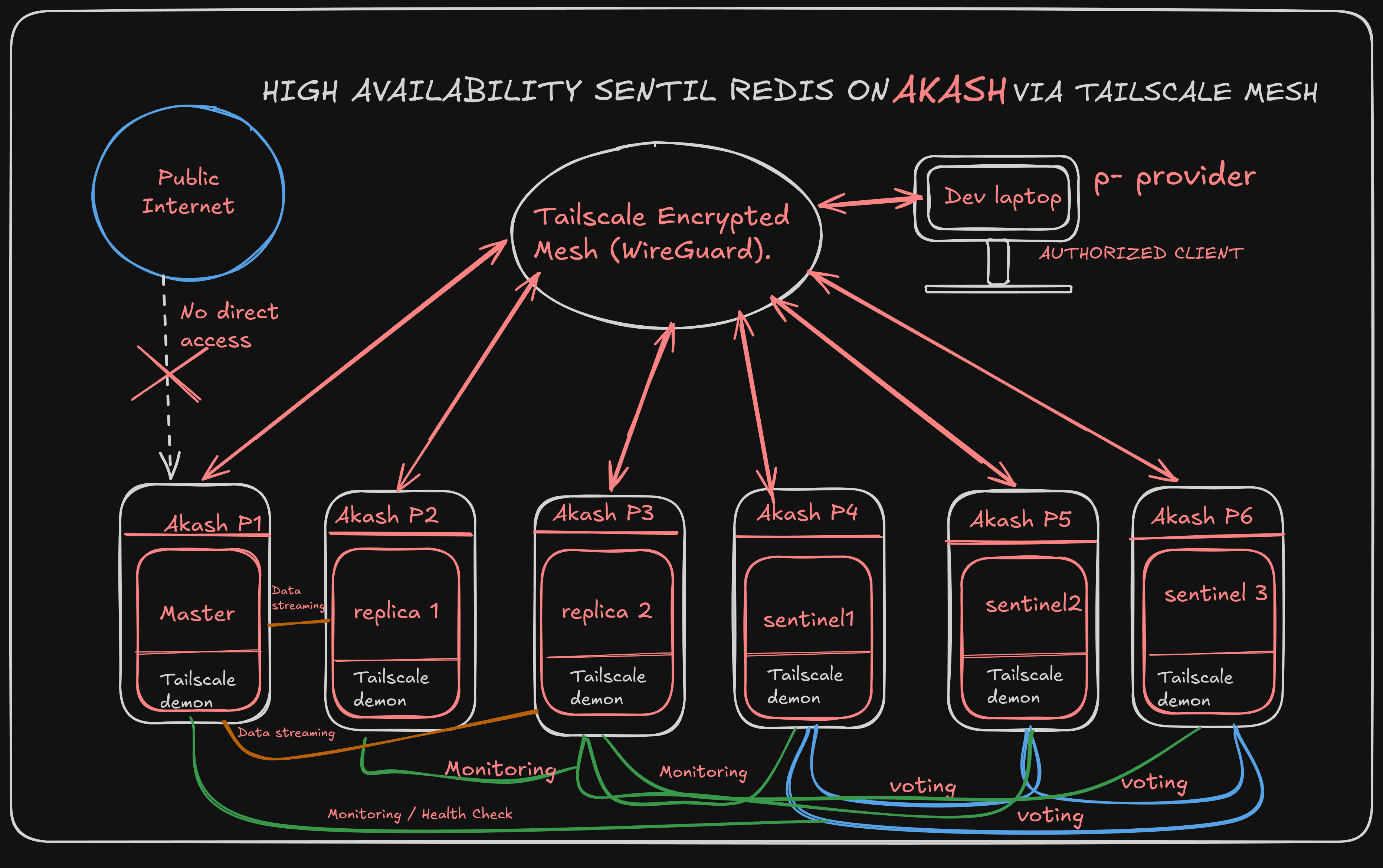

Private Overlay Networking on Akash: Connecting Independent Providers with Tailscale and DERP

Akash Is Built for This

One of the most powerful things about Akash Network is that it lets you deploy workloads across a global, decentralized marketplace of providers — each bringing their own hardware, location, and competitive pricing to the table. This diversity is a feature: it gives you geographic redundancy, cost flexibility, and true decentralization that no single cloud provider can match.

As you start building more sophisticated systems on Akash — distributed backends, AI inference pipelines, multi-region applications — a natural question comes up:

How do my workloads, running on different providers, talk to each other securely?

This is a real architectural challenge in any decentralized compute environment. Workloads on different providers run in separate network namespaces, and there are no guaranteed private subnets between providers. Yet for certain workloads, such as internal APIs, databases, model servers, and job queues, you want communication to happen over a private channel, not the public internet.

This guide walks through a clean, production-ready solution: building a private encrypted mesh network across your Akash deployments using Tailscale. It's lightweight, needs very little configuration, and runs entirely inside your containers.

What Is Tailscale?

Tailscale is a mesh VPN built on WireGuard, the same cryptographic protocol trusted by major cloud providers and security researchers worldwide. Every device that joins a Tailscale network (called a "Tailnet") gets a stable private IP address in the 100.x.x.x range (allocated from the 100.64.0.0/10 CGNAT block), and encrypted peer-to-peer tunnels form automatically between nodes, even across NAT, firewalls, and different cloud environments.
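As a small illustration of the addressing scheme, the sketch below checks whether an address falls inside 100.64.0.0/10, the block Tailscale allocates from. The helper name `is_tailscale_ip` is hypothetical and not part of any Tailscale tooling. Note that not every 100.x.x.x address is inside this block.

```python
import ipaddress

# Tailscale assigns node addresses from the CGNAT block 100.64.0.0/10,
# which spans 100.64.0.0 through 100.127.255.255.
TAILSCALE_RANGE = ipaddress.ip_network("100.64.0.0/10")


def is_tailscale_ip(address: str) -> bool:
    """Return True if the address lies inside 100.64.0.0/10 (hypothetical helper)."""
    return ipaddress.ip_address(address) in TAILSCALE_RANGE


for candidate in ("100.90.158.125", "100.128.0.1", "192.168.1.10"):
    print(candidate, is_tailscale_ip(candidate))
```

A check like this can be handy in a service that should only accept peers coming from the mesh.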

For Akash deployments specifically, Tailscale's userspace networking mode (--tun=userspace-networking) is the key. It runs the WireGuard tunnel entirely in userspace, requiring no kernel-level TUN device — which means it works inside containers without any special privileges.

The result: your Akash workloads can form their own private network, no matter which providers they're running on.

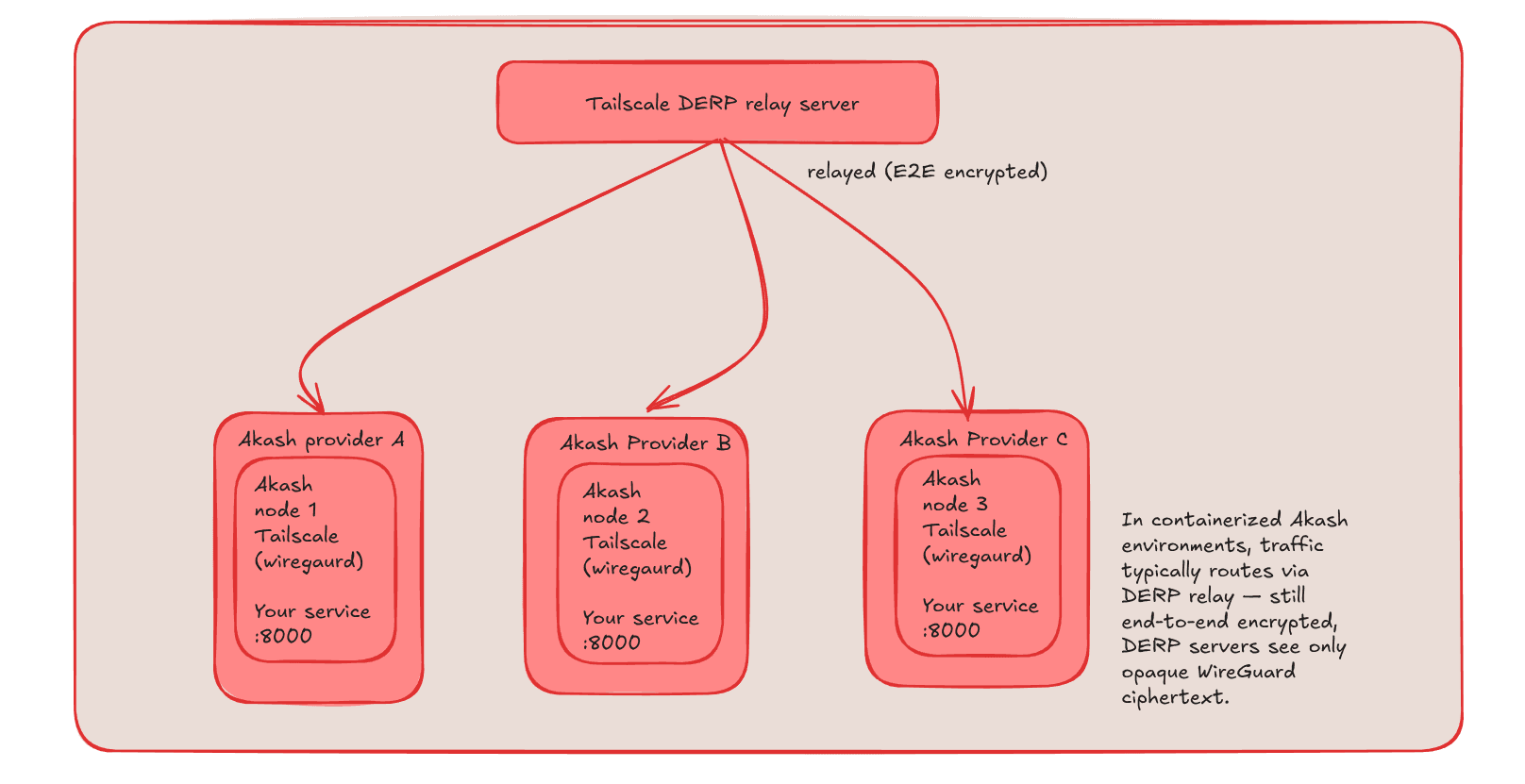

How DERP Works

DERP (Designated Encrypted Relay for Packets) servers are Tailscale's globally distributed relay network. When two nodes can't connect directly, their traffic is relayed through the nearest DERP server. Critically, the relayed traffic stays end-to-end encrypted: DERP servers forward opaque WireGuard packets and have no ability to read the contents. The encryption keys never leave your nodes.

In practice, this means the mesh keeps working across providers even when a direct connection isn't available: traffic falls back to a DERP relay and remains encrypted between your workloads.

The Architecture

The pattern is straightforward. Each container:

1. Starts the Tailscale daemon in userspace mode
2. Joins your private Tailnet using an auth key
3. Runs your actual service bound to 127.0.0.1

Once all containers have joined the Tailnet, they can reach each other using their stable Tailscale IPs (100.x.x.x) — with end-to-end WireGuard encryption. Your internal service traffic stays inside the mesh and never needs to touch the public internet.

Get started

Generating a Tailscale Auth Key

1. Log in to the Tailscale Admin Console
2. Go to Settings → Keys
3. Click Generate auth key
4. Select Reusable so multiple deployments can join using the same key
5. Copy the key. It will look like tskey-auth-xxxx...

The SDL: Three Nodes, Three Providers

Here's a set of three Akash SDLs that each spin up a container, join a private Tailnet, and run a small HTTP server accessible only within the mesh. You'd deploy each one separately to get workloads distributed across providers.

    version: "2.0"
    services:
      backend:
        image: alpine:latest
        expose:
          - port: 8000
            as: 8000
            to:
              - global: true
        env:
          - TS_AUTHKEY=tskey-auth-YOUR_KEY_HERE
          - TS_HOSTNAME=akash-server-1
        command:
          - /bin/sh
          - "-c"
        args:
          - |
            apk add tailscale python3
            # Start Tailscale in userspace mode (works in containers, no kernel TUN needed)
            tailscaled --tun=userspace-networking --socks5-server=localhost:1055 &
            sleep 5
            # Join the private Tailnet
            tailscale up \
              --authkey=$TS_AUTHKEY \
              --hostname=$TS_HOSTNAME \
              --reset \
              --accept-dns=false
            # Start the service, bound to loopback and reachable via the Tailscale mesh
            echo "Hello from $TS_HOSTNAME" > index.html
            python3 -m http.server 8000 --bind 127.0.0.1
    profiles:
      compute:
        backend:
          resources:
            cpu:
              units: 0.5
            memory:
              size: 512Mi
            storage:
              size: 512Mi
      placement:
        dcloud:
          pricing:
            backend:
              denom: ibc/170C677610AC31DF0904FFE09CD3B5C657492170E7E52372E48756B71E56F2F1
              amount: 1000
          signedBy:
            anyOf:
              - akash1365yvmc4s7awdyj3n2sav7xfx76adc6dnmlx63
            allOf: []
    deployment:
      backend:
        dcloud:
          profile: backend
          count: 1

Node 2 — same SDL, change TS_HOSTNAME to akash-server-2

Node 3 — same SDL, change TS_HOSTNAME to akash-server-3

Once all three are deployed and running, open your Tailscale admin console. You'll see all three nodes listed with their 100.x.x.x private IPs, connected to each other.
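The SDL waits a fixed `sleep 5` between starting `tailscaled` and running `tailscale up`. If a node fails to join on a slow provider, one possible alternative is to poll until the daemon's SOCKS5 port accepts connections. The sketch below assumes that the port on localhost:1055 opening is a reasonable signal that the daemon has started. The function name `wait_for_port` is hypothetical, and the script relies on the `python3` package the SDL already installs.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 30.0, interval: float = 0.5) -> bool:
    """Poll until a TCP connection to (host, port) succeeds or the timeout expires."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # A successful connect means something is listening on the port.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Not listening yet (or unreachable): wait briefly and retry.
            time.sleep(interval)
    return False


# Example use inside the container, in place of the fixed sleep:
#   if not wait_for_port("127.0.0.1", 1055):
#       raise SystemExit("tailscaled did not start in time")
```

This only confirms the daemon is listening. Joining the Tailnet still happens in the `tailscale up` step that follows.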

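With all nodes in the same Tailnet, inter-node communication is simple. Because tailscaled runs in userspace mode, the container has no Tailscale network interface, so outbound requests go through the SOCKS5 proxy that tailscaled exposes on localhost:1055. Run these commands from inside any node (curl must be installed in the container, for example with apk add curl).

Option 1: by Tailscale IP (replace the example address with the peer's IP from the admin console)

    curl -x socks5h://127.0.0.1:1055 http://100.90.158.125:8000

Option 2: by hostname (relies on MagicDNS being enabled for the Tailnet)

    curl -x socks5h://127.0.0.1:1055 http://akash-server-1:8000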
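For services that need to make the same call from code rather than curl, here is a minimal standard-library sketch of what the `socks5h://` scheme does: it performs a SOCKS5 CONNECT (RFC 1928) and passes the hostname to the proxy, so name resolution happens on the Tailscale side. It assumes the proxy accepts the no-authentication method and domain-name addresses. The function names are hypothetical, and a real application would more likely use an HTTP client with SOCKS support.

```python
import socket
import struct


def build_connect_request(host: str, port: int) -> bytes:
    """Build a SOCKS5 CONNECT request that hands the hostname to the proxy."""
    name = host.encode("ascii")
    if not 0 < len(name) <= 255:
        raise ValueError("hostname must be 1-255 bytes")
    # VER=5, CMD=1 (CONNECT), RSV=0, ATYP=3 (domain name), then the
    # length-prefixed name and the port in network byte order.
    return b"\x05\x01\x00\x03" + bytes([len(name)]) + name + struct.pack("!H", port)


def _recv_exact(sock: socket.socket, count: int) -> bytes:
    """Read exactly count bytes or raise if the peer closes early."""
    data = b""
    while len(data) < count:
        chunk = sock.recv(count - len(data))
        if not chunk:
            raise ConnectionError("proxy closed the connection early")
        data += chunk
    return data


def http_get_via_socks5(host, port, path="/", proxy=("127.0.0.1", 1055), timeout=10.0) -> bytes:
    """Fetch a path over HTTP/1.0 through the local SOCKS5 proxy; returns the raw response."""
    with socket.create_connection(proxy, timeout=timeout) as sock:
        sock.sendall(b"\x05\x01\x00")  # greeting: one method offered, "no authentication"
        if _recv_exact(sock, 2) != b"\x05\x00":
            raise ConnectionError("proxy did not accept the no-auth method")
        sock.sendall(build_connect_request(host, port))
        version, reply, _reserved, addr_type = _recv_exact(sock, 4)
        if version != 5 or reply != 0:
            raise ConnectionError(f"SOCKS5 CONNECT failed (reply code {reply})")
        # Discard the bound address and port that follow the reply header.
        if addr_type == 1:
            _recv_exact(sock, 4 + 2)
        elif addr_type == 4:
            _recv_exact(sock, 16 + 2)
        elif addr_type == 3:
            _recv_exact(sock, _recv_exact(sock, 1)[0] + 2)
        else:
            raise ConnectionError(f"unexpected address type {addr_type}")
        request = f"GET {path} HTTP/1.0\r\nHost: {host}:{port}\r\n\r\n"
        sock.sendall(request.encode("ascii"))
        chunks = []
        while True:  # HTTP/1.0: the server closes the connection when done
            chunk = sock.recv(4096)
            if not chunk:
                break
            chunks.append(chunk)
    return b"".join(chunks)
```

Calling `http_get_via_socks5("akash-server-1", 8000)` from another node would be the rough equivalent of the hostname-based curl command.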

Because Akash containers sit behind NAT and don't own their network interfaces, Tailscale will typically route this traffic through the nearest DERP relay server rather than a direct P2P connection. The DERP server forwards opaque WireGuard ciphertext — it cannot read the contents. Your data stays end-to-end encrypted the whole way.
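To see which path a given peer is actually using, you can inspect the output of `tailscale status --json`. The sketch below assumes the per-peer field names `HostName`, `Online`, `CurAddr`, and `Relay`, as they appear in that output at the time of writing. The JSON format is not guaranteed to be stable, so treat these names as assumptions. An idle peer can also show an empty `CurAddr`, so "relayed" here means "no direct path currently in use".

```python
import json


def classify_peers(status: dict) -> dict:
    """Map each peer hostname to 'direct', 'relayed via <region>', or 'offline'."""
    result = {}
    for peer in (status.get("Peer") or {}).values():
        name = peer.get("HostName", "unknown")
        if not peer.get("Online", False):
            result[name] = "offline"
        elif peer.get("CurAddr"):
            # A non-empty CurAddr indicates a direct UDP path to the peer.
            result[name] = "direct"
        else:
            region = peer.get("Relay") or "unknown DERP region"
            result[name] = f"relayed via {region}"
    return result


# Hand-written sample shaped like the status output; all values are made up.
SAMPLE = json.loads("""
{
  "Peer": {
    "nodekey:aaa": {"HostName": "akash-server-2", "Online": true,
                    "CurAddr": "", "Relay": "fra"},
    "nodekey:bbb": {"HostName": "akash-server-3", "Online": true,
                    "CurAddr": "203.0.113.7:41641", "Relay": "nyc"}
  }
}
""")
print(classify_peers(SAMPLE))
```

Inside a node, the input would come from running `tailscale status --json` and passing the parsed result to `classify_peers`.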

Real-World Use Cases

This pattern unlocks a range of distributed architectures that weren't straightforward to build on Akash before:

Distributed AI Inference

Run a coordinator node and multiple GPU worker nodes across different providers. The coordinator distributes inference jobs over the private mesh. Workers respond with results. No request payload is exposed to the public internet.

Internal APIs and Microservices

Build a microservices architecture where each service runs on the most cost-effective provider for its workload type. Service-to-service calls happen over the encrypted mesh. Only your public-facing gateway exposes a port to the world.

Private Databases

Run PostgreSQL or Redis bound to loopback. Only nodes in your Tailnet — authorized by ACL — can connect. Your database never appears on a port scan.
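As a rough illustration of the ACL side, the sketch below generates a Tailnet policy that lets tagged nodes reach each other on a single port only. The tag name `tag:akash`, the choice of `autogroup:admin` as tag owner, and the helper name `build_policy` are all assumptions for this example. The rule only takes effect if your nodes join with an auth key that carries the same tag.

```python
import json


def build_policy(tag: str = "tag:akash", port: int = 8000) -> dict:
    """Build a policy allowing nodes with the tag to reach each other on one port."""
    return {
        # Who is allowed to assign the tag to devices or auth keys.
        "tagOwners": {tag: ["autogroup:admin"]},
        # Anything not explicitly accepted here is denied.
        "acls": [
            {"action": "accept", "src": [tag], "dst": [f"{tag}:{port}"]},
        ],
    }


# Example: restrict mesh traffic to PostgreSQL's default port.
print(json.dumps(build_policy(port=5432), indent=2))
```

The printed JSON can be pasted into the access controls editor in the Tailscale admin console and adjusted from there.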

Multi-Region Job Queues

Distribute workers across providers in different regions for latency-optimized job processing. Workers pull tasks from a coordinator over the mesh and return results the same way.

Staging Environments

Spin up an isolated Tailnet for a staging environment. Your staging services are completely unreachable from the outside world and from your production Tailnet — by design.

Conclusion

This Tailscale pattern is specifically for workloads with internal communication requirements: services that need to talk to each other over a channel that is private by design, the same way microservices in a Kubernetes cluster communicate over an internal network rather than the public internet. It doesn't change how you pay for compute, how providers are selected, or how the Akash marketplace works. Providers still get their bids, still run your containers, and still get paid.

Think of it as the Akash equivalent of Kubernetes' internal cluster networking — a pattern for building sophisticated distributed systems on top of the compute layer that Akash providers so reliably deliver.